Exhibitions

CodeX

OnSite Gallery

Toronto, Canada

Conferences

ACADIA 2020

Publications

Distributed Proximities: Proceedings of the 2021 ACADIA Conference

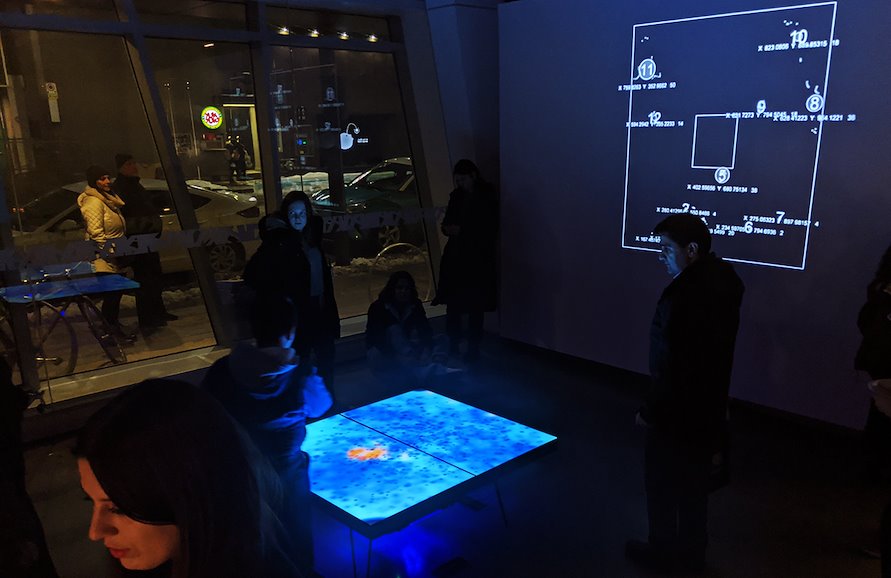

Pulse v2 continues research into how multiple modes of representation can affect our understanding of machine vision systems and the way they “see” us. The second iteration of this work uses a 2D lidar scanner to generate a continuous point cloud of the gallery space in real time. Although the scanner is placed 20 cm off the floor under the main screen, it has been taught to interpret this data as the positions of people in the room. The data is then displayed in two ways: a wall projection that shows what the machine sees, and a more abstract system on the screen. The main display feeds the data into a system of roughly 20,000 particles running Verlet physics and a custom shader, creating a 2D organism that responds to the position and number of visitors in the space.
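As a rough illustration of the pipeline described above, the sketch below converts one 2D lidar sweep from polar readings into Cartesian points and then advances a set of particles with position Verlet integration. It is not the code used in the installation: the function names (`scan_to_points`, `attraction`, `verlet_step`), the inverse-distance pull toward visitors, and every parameter value are assumptions chosen for readability, and the person-detection step and the shader are left out.

```python
import math

def scan_to_points(ranges, angle_min, angle_step, max_range=10.0):
    """Convert one 2D lidar sweep (polar readings) into (x, y) points.

    `ranges` holds one distance per beam; beam i points at
    angle_min + i * angle_step radians. All names here are hypothetical.
    """
    points = []
    for i, r in enumerate(ranges):
        if 0.0 < r <= max_range:  # skip invalid or out-of-range returns
            a = angle_min + i * angle_step
            points.append((r * math.cos(a), r * math.sin(a)))
    return points

def attraction(pos, visitors, strength=1.0, eps=1e-3):
    """Assumed force model: each particle is pulled toward every visitor,
    with a pull that weakens with distance. eps avoids division by zero."""
    accel = []
    for x, y in pos:
        ax = ay = 0.0
        for vx, vy in visitors:
            dx, dy = vx - x, vy - y
            d2 = dx * dx + dy * dy + eps
            ax += strength * dx / d2
            ay += strength * dy / d2
        accel.append((ax, ay))
    return accel

def verlet_step(pos, prev, accel, dt, damping=0.99):
    """Position Verlet: x_next = x + (x - x_prev) * damping + a * dt^2.

    Velocity is implicit in the difference between the current and the
    previous position. Returns (new_positions, new_previous_positions).
    """
    new_pos = []
    for (x, y), (px, py), (ax, ay) in zip(pos, prev, accel):
        nx = x + (x - px) * damping + ax * dt * dt
        ny = y + (y - py) * damping + ay * dt * dt
        new_pos.append((nx, ny))
    return new_pos, pos

if __name__ == "__main__":
    # Treat raw scan points as stand-ins for detected visitor positions.
    visitors = scan_to_points([1.5, 0.0, 2.0], angle_min=0.0, angle_step=math.pi / 2)
    pos = [(0.0, 0.0), (0.5, 0.5)]
    prev = list(pos)  # particles start at rest
    for _ in range(10):
        pos, prev = verlet_step(pos, prev, attraction(pos, visitors), dt=1 / 60)
    print(pos)
```

A system on the scale of 20,000 particles would normally run this update on the GPU or with vectorized arrays rather than Python loops; the loops here only make the update rule explicit.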